The Problem That's Plagued Robotics for Decades

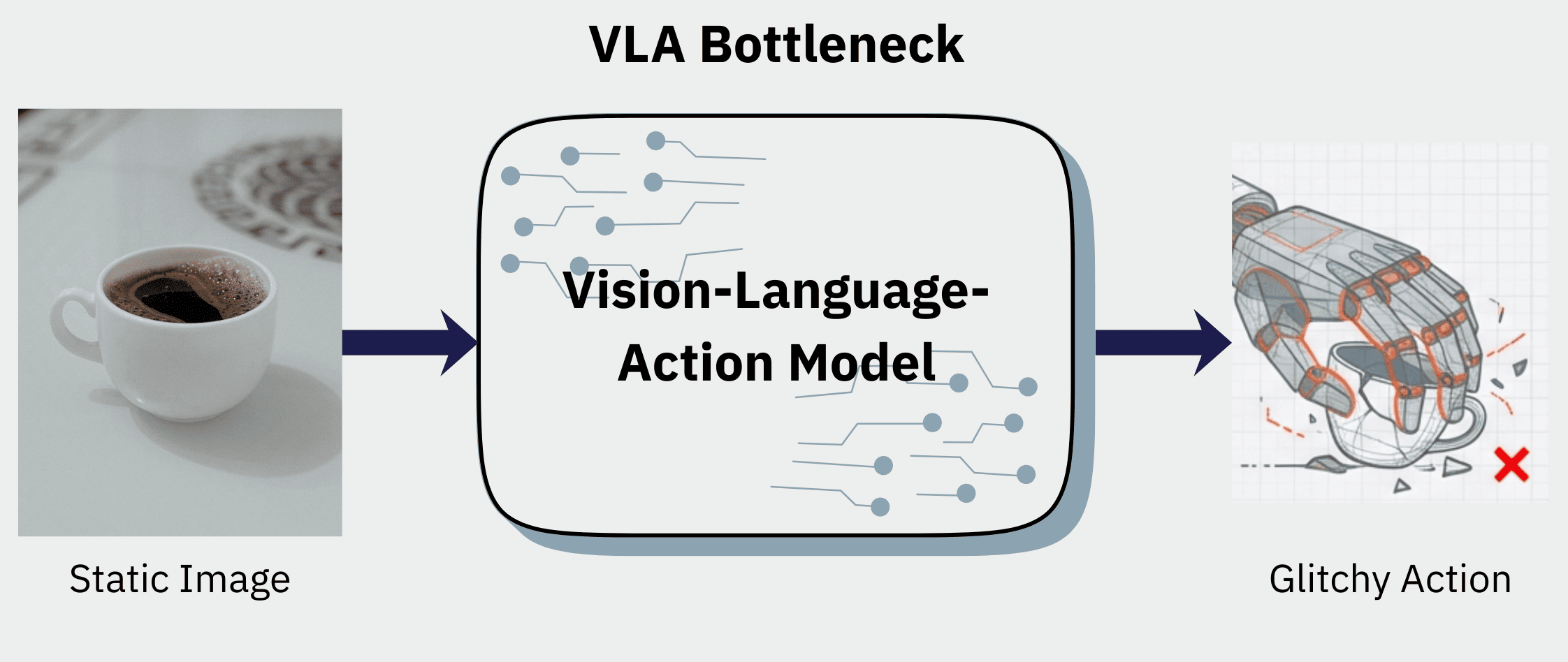

For decades, robotics has been haunted by a seemingly intractable problem: teaching a machine the common sense needed to perform simple, everyday tasks. The traditional path—laboriously collecting thousands of hours of expensive, custom robot data for every single skill—is a bottleneck that has kept truly helpful robots out of our homes. It's slow, costly, and utterly fails to scale to the near-infinite variety of the real world.

But a new paradigm is finally breaking this logjam. Instead of being spoon-fed robot-specific instructions, a new generation of AI can learn general skills by leveraging the most abundant data source on the planet: internet video. By observing countless hours of human activity, a robot can develop a foundational understanding of physics and interaction, much like a person does.

This article explores the four most surprising takeaways from this revolutionary approach, as demonstrated by 1X's humanoid robot, NEO, and its groundbreaking World Model.

Takeaway 1: It Learns by "Imagining" the Task First

A Two-Step Process: Dream, then Do

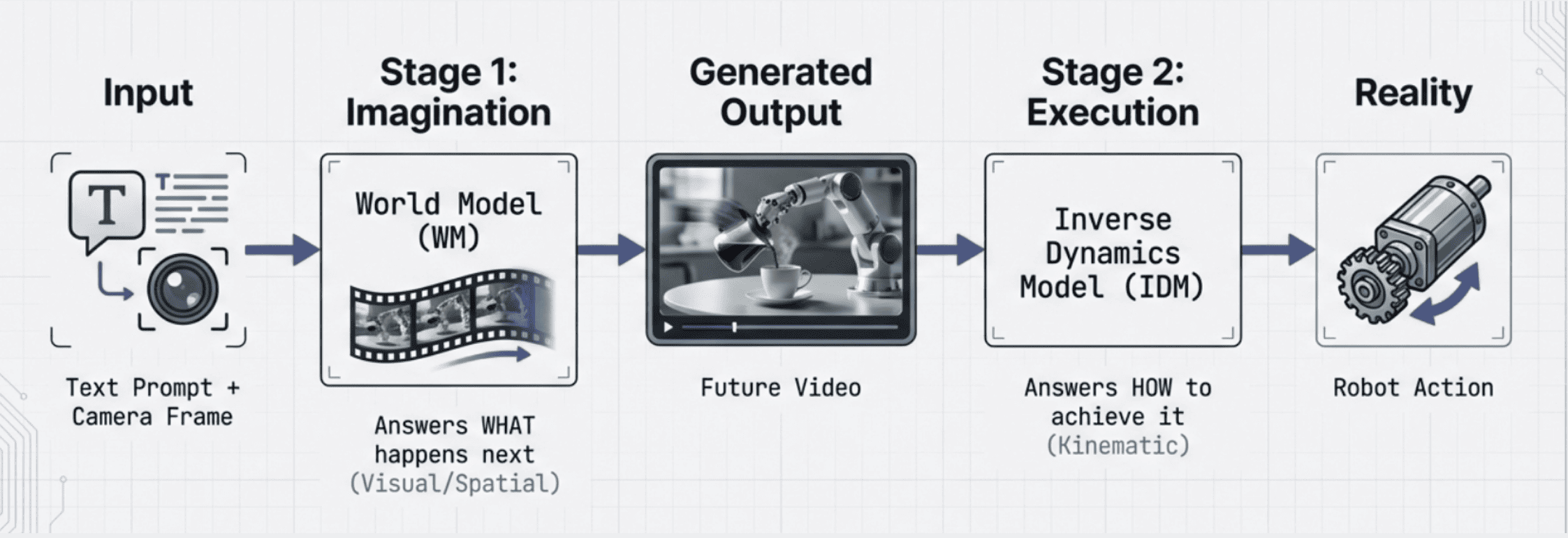

The fundamental shift is away from models that simply try to map a command directly to a robot's actions. Instead, 1X's World Model (1XWM) uses a two-step process that uncannily mirrors human cognition. Think of how you decide to pick up a glass: first, you picture the goal, and then your brain subconsciously coordinates the precise muscle movements to achieve it. NEO does something similar.

Step 1: Imagine a Successful Outcome

When given a text prompt like "pack this orange into the lunchbox," the World Model generates a short, physically plausible video from NEO's perspective. It literally visualizes, or "imagines," what a successful attempt looks like.

Step 2: Translate Imagination to Action

An Inverse Dynamics Model (IDM) then analyzes this generated video frame-by-frame. It acts as the robot's cerebellum, translating the visual plan into the exact sequence of physical motor commands NEO needs to execute in the real world.

1X World Model Pipeline

This means the robot isn't just mimicking; it's learning from a deep understanding of world dynamics derived from video. It's a system that can first dream of success and then work backward to make it a reality.
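To make the "dream, then do" split concrete, here is a minimal sketch of how the two stages could be wired together. Everything in it is an assumption for illustration: the `TwoStagePolicy` class, the `world_model` and `inverse_dynamics` callables, and the idea that the IDM looks at consecutive frame pairs are hypothetical stand-ins, not 1X's actual interfaces. The toy models move a one-dimensional "hand position" so the example can run without any real video model.

```python
from dataclasses import dataclass
from typing import Callable, List

Frame = List[float]   # stand-in for an image; a real frame would be a pixel array
Action = List[float]  # stand-in for a motor command, e.g. joint targets


@dataclass
class TwoStagePolicy:
    # Hypothetical interfaces, not taken from 1X's code.
    world_model: Callable[[str, Frame], List[Frame]]    # (prompt, current frame) -> imagined frames
    inverse_dynamics: Callable[[Frame, Frame], Action]  # (frame_t, frame_t+1) -> action

    def plan(self, prompt: str, observation: Frame) -> List[Action]:
        # Step 1: imagine a video of the task succeeding.
        video = self.world_model(prompt, observation)
        # Step 2: recover the action that connects each consecutive pair of frames
        # (pairwise inference is an assumption made for this sketch).
        frames = [observation] + video
        return [self.inverse_dynamics(a, b) for a, b in zip(frames, frames[1:])]


def toy_world_model(prompt: str, frame: Frame) -> List[Frame]:
    # Toy assumption: every prompt means "move the hand from x to 1.0 in 4 steps".
    start = frame[0]
    return [[start + (1.0 - start) * k / 4] for k in range(1, 5)]


def toy_idm(frame_a: Frame, frame_b: Frame) -> Action:
    # Toy assumption: the action is simply the change in hand position.
    return [frame_b[0] - frame_a[0]]


policy = TwoStagePolicy(toy_world_model, toy_idm)
actions = policy.plan("pack this orange into the lunchbox", [0.0])
```

The point of the split is that the world model never has to know about motors, and the IDM never has to understand language. Each stage can be trained and improved on its own.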

Takeaway 2: The "Humanoid Advantage" is the Secret Sauce

Why a Human-Like Body Changes Everything

The true unlock for this entire learning paradigm isn't just the AI model—it's the robot's human-like body. The World Model is pre-trained on an internet-scale dataset of egocentric human video, which implicitly encodes the structural priors of reality: how people move, apply force, and interact with objects.

This vast trove of human knowledge transfers directly to NEO because it is "kinematically and dynamically congruent with the human form." This isn't just a superficial resemblance. It means the robot's core interaction dynamics—its friction, inertia, and contact behavior—so closely match human motion that the model's learned priors remain valid. The physical similarity closes the "human-robot translation gap," allowing the robot to successfully perform the actions it visualizes because its body is built to perform them in a human-like way.

As the 1X team puts it in their analysis:

"What the model can visualize, NEO usually can do."

This principle underscores why humanoid morphology matters—it's not cosmetic, it's fundamental to successful transfer learning from human video.

Takeaway 3: It Can Perform Tasks It Has Never Seen Before

Generalizing from Human Experience

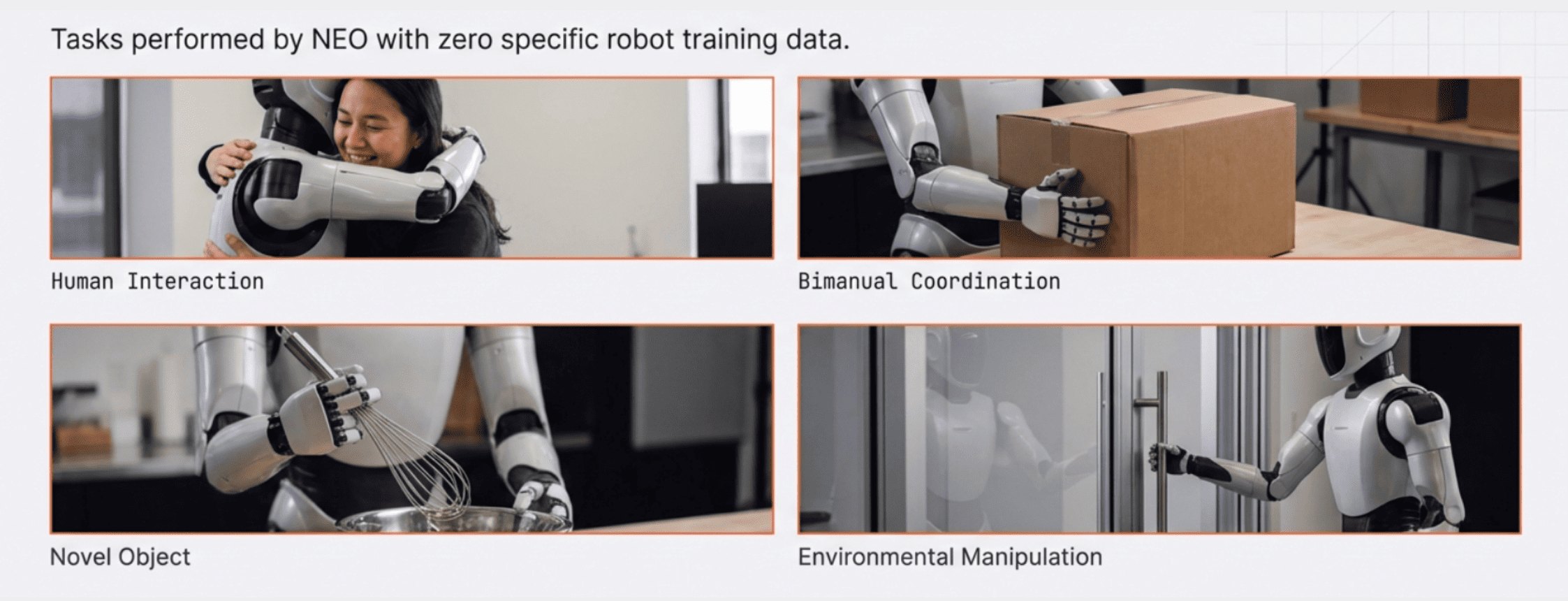

The most powerful result of this approach is the model's ability to generalize to completely novel situations. NEO's robot-specific training data is incredibly narrow: 98.5% of it consists of simple pick-and-place tasks, filtered for tabletop manipulation with hands in view. Despite this limitation, NEO can successfully perform entirely new behaviors.

NEO has demonstrated skills like:

- Two-handed coordination

- Scrubbing dishes

- Interacting with humans

- Operating a toilet seat (a task it has never seen before)

- Steaming a shirt

- Opening a sliding door

These abilities are absent from its robot training dataset, which strongly suggests the knowledge is being transferred from the general-purpose human video data. While some dexterous tasks like pouring or drawing remain challenging, many novel behaviors are already within reach.

Tasks performed by NEO

The Limitation: 3D Grounding

Of course, the system has limitations. The generated videos can sometimes be "overly optimistic" because training on 2D video can leave the model with weak 3D grounding. In practice, the real robot might undershoot or overshoot a target even when the imagined video looks perfect. But this is an engineering challenge, not a fundamental dead end.

Takeaway 4: Better Imagination Leads to Better Performance

Improving Success with More Computation

A fascinating connection has emerged: the visual quality of the generated video directly correlates with the real-world success rate of the task. If the model "imagines" a plausible, physically correct video, the robot is far more likely to succeed.

This insight led to a simple but profound experiment with a "pull tissue" task:

- Single video rollout: 30% success rate

- Eight video rollouts (best selected): 45% success rate

This finding is significant. It means robot performance can be improved at test-time with more computation, without any changes to the model or new training data. Simply by generating more "ideas" and picking the most plausible one, the robot becomes more capable. This opens the door to continuous improvement through computational scaling.
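In numbers, going from 30% to 45% is a gain of 15 percentage points, or a 1.5x relative improvement, with no retraining. The selection step can be sketched as a generic best-of-N loop. This is a minimal illustration, not 1X's method: the article does not say how the best rollout is chosen, so the `generate` and `score` callables below are hypothetical placeholders for "sample one imagined video" and "rate how plausible it looks".

```python
from typing import Callable, Tuple, TypeVar

Video = TypeVar("Video")


def best_of_n(
    generate: Callable[[], Video],     # hypothetical: sample one imagined rollout
    score: Callable[[Video], float],   # hypothetical: higher means more plausible
    n: int,
) -> Tuple[Video, float]:
    """Sample n rollouts and return the highest-scoring one with its score."""
    if n < 1:
        raise ValueError("n must be at least 1")
    candidates = [generate() for _ in range(n)]
    # Pair each score with its index so the winning candidate can be looked up.
    best_score, best_index = max((score(v), i) for i, v in enumerate(candidates))
    return candidates[best_index], best_score


# Toy usage: each "video" is just a plausibility number, scored by itself.
samples = iter([0.2, 0.9, 0.5])
chosen, chosen_score = best_of_n(lambda: next(samples), lambda v: v, n=3)

# Arithmetic from the "pull tissue" experiment reported above.
absolute_gain = 45 - 30   # percentage points
relative_gain = 45 / 30   # ratio of success rates
```

The cost of this trick is inference time, since every extra rollout is another video to generate. How much it helps depends on how well the scoring step can tell a good imagined video from a bad one.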

The Path Forward: A Flywheel for Self-Improvement

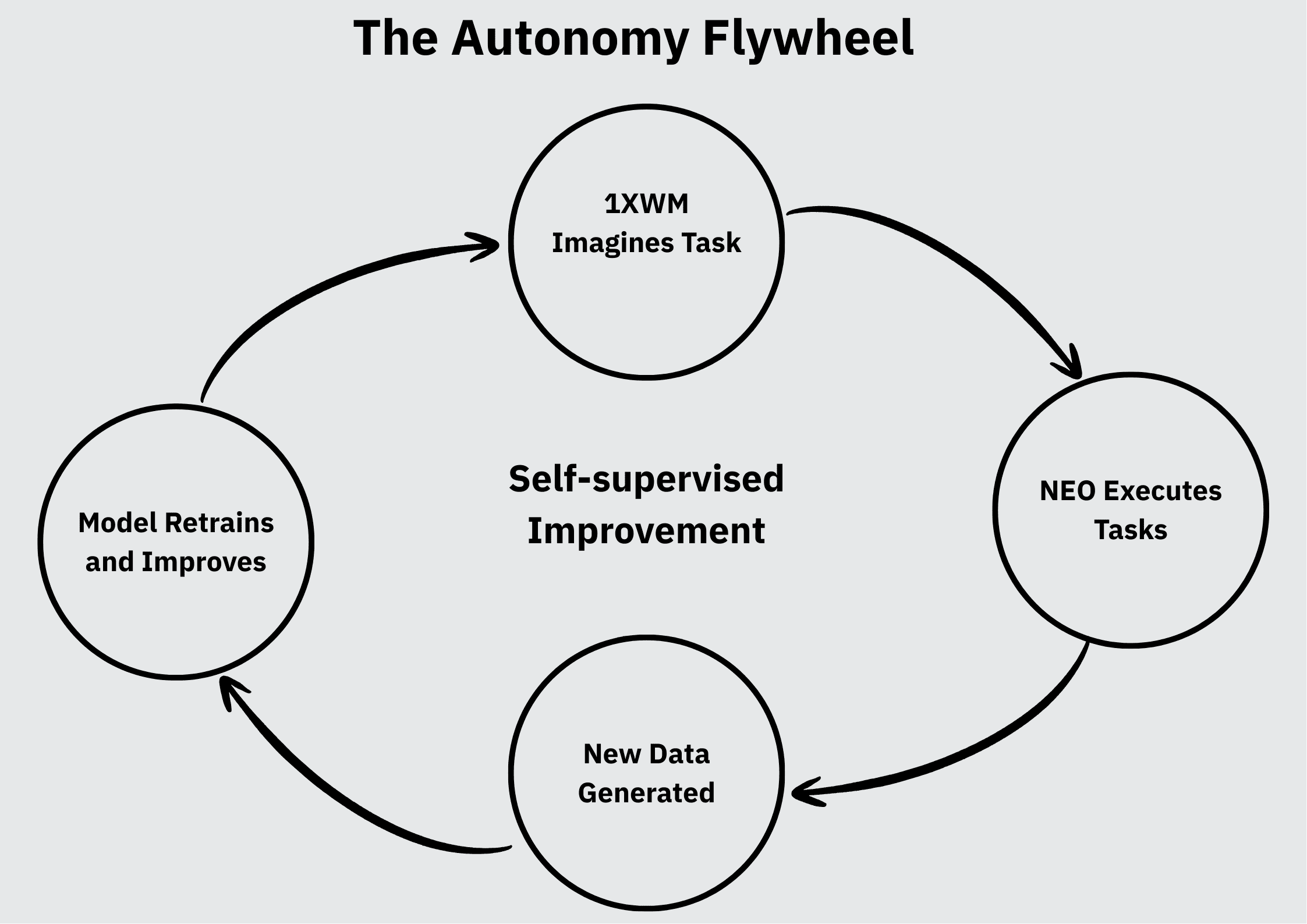

The 1X World Model represents a true paradigm shift in robotics and AI. Instead of being constrained by the Sisyphean task of robot data collection, it leverages the world's existing video data to build a foundational understanding of physics and action—an understanding then grounded in the real world through a humanoid body.

This creates a powerful "flywheel" effect:

- NEO attempts real-world tasks

- It generates unlimited new data from its own experiences (successes and failures)

- This data feeds back into the AI model to continuously refine performance

- Improved models enable more capable robots
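The loop above can be sketched in a few lines. This is only an illustration of the data flow, under stated assumptions: the `Flywheel` class, the `attempt` callable (run a task on the robot and report success), and the `retrain` callable (update the model from logged episodes) are hypothetical names, and the article does not describe how 1X actually implements this cycle.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Episode:
    task: str
    success: bool


@dataclass
class Flywheel:
    attempt: Callable[[str], bool]            # hypothetical: run a task, return success
    retrain: Callable[[List[Episode]], None]  # hypothetical: update the model from the log
    log: List[Episode] = field(default_factory=list)

    def run_round(self, tasks: List[str]) -> float:
        # Record every attempt; failures are kept because they are data too.
        for task in tasks:
            self.log.append(Episode(task, self.attempt(task)))
        # Feed the accumulated experience back into the model.
        self.retrain(self.log)
        # Report the running success rate across everything logged so far.
        return sum(e.success for e in self.log) / len(self.log)


# Toy usage: the "robot" fails only at pouring, and retraining just counts episodes.
episodes_seen: List[int] = []
flywheel = Flywheel(
    attempt=lambda task: task != "pour water",
    retrain=lambda episodes: episodes_seen.append(len(episodes)),
)
success_rate = flywheel.run_round(["open sliding door", "pour water"])
```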

The Autonomy Flywheel

While real-world engineering challenges remain—such as reducing the 11-second inference time and implementing closed-loop replanning for longer tasks—the path forward is clear. As the underlying video models improve, so too will NEO's capabilities, creating a virtuous cycle of self-improvement.

This represents a fundamental shift: robots that teach themselves new skills by attempting them, powered by the collective knowledge encoded in human video and the physics-aware capabilities of modern AI.

Key Takeaways

- Robots can now learn mostly from internet-scale human video, needing only a narrow set of custom robot data.

- The two-step process of "imagining then executing" mirrors human cognition.

- Humanoid morphology is essential for transferring human knowledge to robots.

- Novel task generalization is possible despite narrow training data (98.5% pick-and-place).

- Computational scaling at inference time improves success rates without retraining.