Humanoid’s announcement of KinetIQ marks a definitive end to this era of the "data silo." KinetIQ is a comprehensive AI framework designed for the end-to-end orchestration of humanoid robot fleets across industrial, service, and home applications. By moving beyond hard-coded scripts and into the realm of Physical AI, Humanoid is establishing a unified "brain" capable of inhabiting and controlling multiple different bodies simultaneously.

Here are the five most impactful takeaways from the KinetIQ announcement and why they represent a strategic shift in the future of automation.

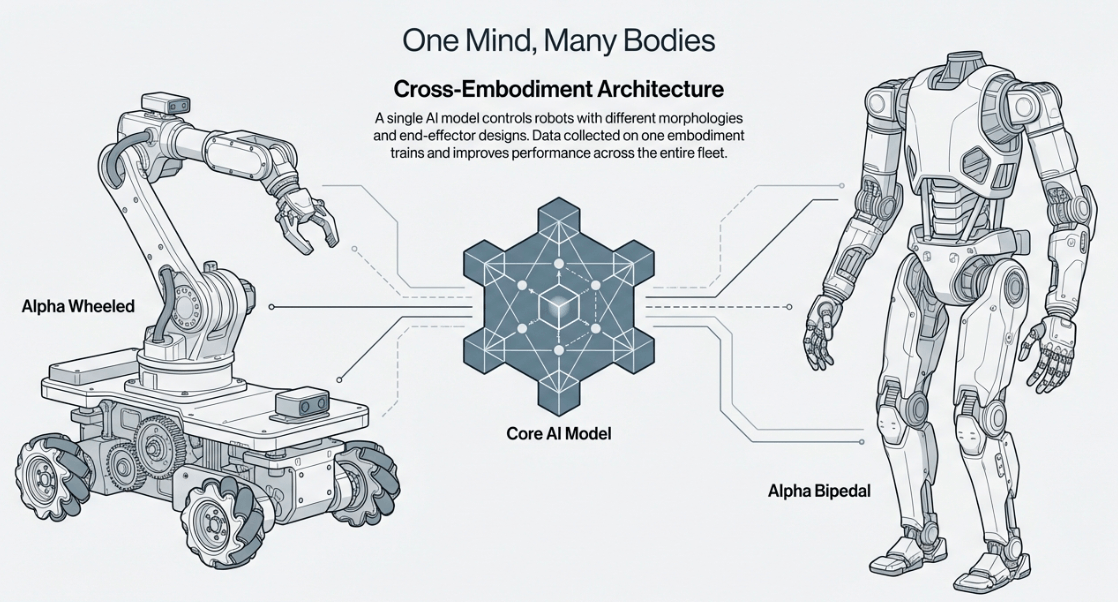

Takeaway 1: The "Cross-Embodiment" Breakthrough

In traditional robotics, a wheeled robot and a bipedal robot were treated as entirely different species, each requiring its own bespoke software. KinetIQ shatters this barrier with cross-embodiment capabilities. A single AI model now controls robots with radically different morphologies—from the Alpha Wheeled platform, optimized for back-of-store grocery picking and logistics, to the Alpha Bipedal model, engineered for the nuanced adaptability required in home service.

Analysis: This is the birth of the "Global Brain" for robots. Because data collected on one embodiment helps train the others, every hour a wheeled robot spends moving containers in a retail warehouse contributes to the intelligence of a bipedal assistant in a home. This data synergy drastically accelerates the path to commercial scale, ensuring that specialized learning is never wasted, regardless of the hardware it was born on.

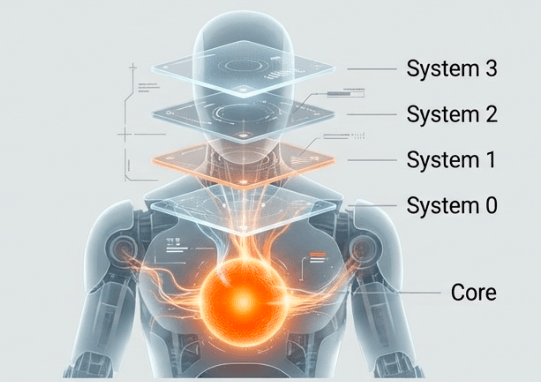

Takeaway 2: A Four-Layered Concept of Time

The KinetIQ architecture is cross-timescale, managing four distinct cognitive layers that operate simultaneously at different frequencies. Crucially, the system follows an agentic pattern where each layer treats the layer below it as a set of tools, prompting them to achieve higher-level goals.

- System 3 (Fleet-Level Orchestration): Operates on a timescale of seconds. It integrates directly with facility management systems to assign tasks, coordinate robot swaps at workstations, and maximize throughput.

- System 2 (Robot-Level Reasoning): Spans seconds to sub-minutes, decomposing fleet goals into specific environmental interactions.

- System 1 (Low-Level Execution): Operates at 5–10 Hz, commanding specific body parts to perform tasks like picking, placing, or locomoting.

- System 0 (Whole-Body Control): The "reflex" layer, running at 50 Hz to ensure dynamic stability.

Analysis: This structure mimics biological intelligence. While System 3 is concerned with logistics and facility-wide uptime, System 0 is calculating the torque required for stability roughly every 20 milliseconds (one cycle at 50 Hz). Notably, System 0 is trained solely in simulation, leveraging over 15,000 hours of reinforcement learning experience to produce a model capable of nearly any movement before it ever touches a physical floor.
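To make the cross-timescale idea concrete, here is a minimal Python sketch of how often each layer would run if all of them were driven from a single 50 Hz base clock. The System 0 and System 1 rates come from the announcement; the 2-second System 2 interval, the single-clock design, and every name in the code (`Layer`, `ticks_per_update`, `simulate`) are illustrative assumptions, not Humanoid's implementation.

```python
from dataclasses import dataclass
from typing import Dict

BASE_HZ = 50  # System 0 rate quoted in the announcement


@dataclass
class Layer:
    name: str
    period_ticks: int  # base-clock ticks between two updates of this layer
    runs: int = 0

    def maybe_step(self, tick: int) -> None:
        # A layer only does work on ticks that are multiples of its period.
        if tick % self.period_ticks == 0:
            self.runs += 1


def ticks_per_update(rate_hz: float) -> int:
    """Convert a layer's update rate into a period on the 50 Hz base clock."""
    return round(BASE_HZ / rate_hz)


def simulate(seconds: int) -> Dict[str, int]:
    layers = [
        Layer("system0", ticks_per_update(50)),   # 50 Hz reflex layer
        Layer("system1", ticks_per_update(10)),   # upper end of 5-10 Hz
        Layer("system2", ticks_per_update(0.5)),  # assumed: one update every 2 s
    ]
    for tick in range(seconds * BASE_HZ):
        for layer in layers:
            layer.maybe_step(tick)
    return {layer.name: layer.runs for layer in layers}


if __name__ == "__main__":
    print(simulate(10))
```

Under these assumptions, 10 simulated seconds give 500 reflex updates, 100 execution updates, and only 5 reasoning updates, which is the sense in which the layers share one robot but live on very different clocks.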

Takeaway 3: Robots as Agentic Problem Solvers (System 2)

At the reasoning layer, KinetIQ utilizes an omni-modal language model to observe the environment and interpret high-level instructions. Unlike old-school robots that fail when a box is turned the wrong way, a KinetIQ-powered robot uses visual context to update its plan dynamically.

Analysis: We are seeing a transition from "fixed sequences" to "dynamic reasoning." When a robot identifies a more efficient way to navigate a cluttered aisle or pack a non-standard container, that successful plan can be saved as a new Standard Operating Procedure (SOP). These SOPs are then shared across the entire fleet. Imagine a solution found by a single robot in a Tokyo warehouse being instantly available to a robot in London; that is the power of shared agentic learning.
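One way to picture fleet-wide SOP sharing is a registry that any robot can publish to and read from. The sketch below is purely hypothetical: the `SOP` and `SOPRegistry` names, the robot and task identifiers, and the rule that a shorter plan counts as "more efficient" are all assumptions made for illustration, since the announcement does not describe how SOPs are stored or ranked.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class SOP:
    task: str
    steps: Tuple[str, ...]
    source_robot: str  # which robot discovered this plan


@dataclass
class SOPRegistry:
    sops: Dict[str, SOP] = field(default_factory=dict)

    def publish(self, sop: SOP) -> None:
        # Assumed ranking rule: fewer steps stands in for "more efficient".
        current = self.sops.get(sop.task)
        if current is None or len(sop.steps) < len(current.steps):
            self.sops[sop.task] = sop

    def lookup(self, task: str) -> Optional[SOP]:
        return self.sops.get(task)


if __name__ == "__main__":
    registry = SOPRegistry()
    registry.publish(
        SOP("pack_odd_container", ("grasp", "rotate", "place"), "tokyo-07")
    )
    # A robot elsewhere in the fleet reuses the plan found in Tokyo.
    plan = registry.lookup("pack_odd_container")
    print(plan.source_robot, plan.steps)
```

A real deployment would also need validation before a plan is promoted to an SOP; the sketch leaves that out.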

Takeaway 4: The Intelligence to Ask for Help

One of the most critical "dragons" Humanoid has slain is the problem of robot "stubbornness," where a machine fails but keeps repeating the same error. KinetIQ introduces a feedback loop in which System 2 monitors the progress of System 1. If the robot-level agent determines that it cannot complete a task, it flags the exception and requests human intervention through the fleet layer.

Assistance is delivered through a human-in-the-loop mechanism with two modes:

- Prompting: A human provides new high-level guidance to the System 2 reasoning agent.

- Teleoperation: A human takes direct control of the joints at the System 1 level for complex, non-standard manipulations.

Analysis: This is a vital safety and efficiency feature for commercial scalability. By allowing a human to "unstick" a robot via a quick prompt or remote session, Humanoid ensures that a single outlier doesn't shut down an entire workflow. It turns human operators into high-level supervisors of an increasingly autonomous fleet.
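The monitoring loop described above can be sketched as a small decision function. Everything specific here is an assumption: the announcement does not say how many retries are allowed or how the system chooses between prompting and teleoperation, so this sketch simply tries a prompt first and falls back to teleoperation if failures continue. The `Decision` and `supervise` names are hypothetical.

```python
from enum import Enum
from typing import Iterable, List


class Decision(Enum):
    CONTINUE = "continue"
    RETRY = "retry"
    REQUEST_PROMPT = "request_prompt"
    REQUEST_TELEOP = "request_teleop"


def supervise(results: Iterable[bool], max_retries: int = 2) -> List[Decision]:
    """Map each System 1 attempt outcome to a System 2 decision."""
    decisions: List[Decision] = []
    failures = 0
    prompted = False
    for succeeded in results:
        if succeeded:
            failures, prompted = 0, False
            decisions.append(Decision.CONTINUE)
            continue
        failures += 1
        if failures <= max_retries:
            decisions.append(Decision.RETRY)
        elif not prompted:
            # Assumed policy: ask for new high-level guidance first.
            prompted, failures = True, 0
            decisions.append(Decision.REQUEST_PROMPT)
        else:
            # Still stuck after a prompt: hand control to a teleoperator.
            decisions.append(Decision.REQUEST_TELEOP)
    return decisions


if __name__ == "__main__":
    outcomes = [False, False, False, False, False, False, True]
    for outcome, decision in zip(outcomes, supervise(outcomes)):
        print(outcome, decision.value)
```

The point of the sketch is the bounded retry count: the robot stops repeating a failing action after a fixed number of attempts and escalates instead.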

Takeaway 5: Solving the "Reality Gap" with Prefix Conditioning

To bridge the gap between high-level "thoughts" and physical execution, System 1 uses a Vision-Language-Action (VLA) neural network. However, in asynchronous systems, the "reality" the robot predicted a split-second ago might change by the time the action is executed. To solve this, Humanoid uses prefix conditioning. Think of it like a person starting to speak the next sentence while still finishing the current one; to ensure the speech is fluid and logical, the second half of the thought must be conditioned on exactly what has already left the speaker's mouth. In KinetIQ, every new chunk of action is conditioned on the part of the previous chunk currently being executed.

Analysis: Conditioning each new chunk on the actions already being executed keeps the robot's motion consistent across chunk boundaries, so a freshly planned action does not contradict what the body is already doing. Because the technique applies to both autoregressive and flow-matching models, KinetIQ should remain compatible with the next generation of AI models without requiring a total architectural overhaul.
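A toy Python sketch can show the shape of prefix conditioning. It is not the KinetIQ model: `toy_policy` is a stand-in that moves halfway toward a target on every step, and the chunk sizes, latency, and function names are assumptions chosen for illustration. The part that matches the description above is that the actions executing during inference are passed in as a fixed prefix, and the new chunk continues from them.

```python
from typing import List, Sequence, Tuple


def toy_policy(target: float, prefix: Sequence[float], length: int) -> List[float]:
    """Stand-in for a VLA model: keep the committed prefix, then extend it.

    Each generated action moves halfway from the previous action to the target.
    """
    chunk = list(prefix)
    last = chunk[-1] if chunk else 0.0
    while len(chunk) < length:
        last += 0.5 * (target - last)
        chunk.append(last)
    return chunk


def next_chunk(
    prev_chunk: Sequence[float],
    cursor: int,
    latency_steps: int,
    target: float,
    horizon: int,
) -> Tuple[List[float], List[float]]:
    # Actions that will run while inference is in flight are already committed.
    prefix = list(prev_chunk[cursor:cursor + latency_steps])
    full = toy_policy(target, prefix, len(prefix) + horizon)
    # The prefix is executed from the old chunk; only the rest is new.
    return prefix, full[len(prefix):]


if __name__ == "__main__":
    prefix, continuation = next_chunk(
        prev_chunk=[0.0, 1.0, 2.0, 3.0],
        cursor=1,
        latency_steps=2,
        target=4.0,
        horizon=3,
    )
    print(prefix, continuation)
```

With the values in the usage example, the committed prefix is `[1.0, 2.0]` and the new actions are `[3.0, 3.5, 3.75]`, so the new chunk picks up from the last committed action rather than restarting from a stale state.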

Conclusion: The Road to Physical AI

By weaving together orchestration, reasoning, execution, and reflex-level control, the KinetIQ framework represents a cohesive leap toward true Physical AI. Humanoid is developing a scalable, shared intelligence that can inhabit any body and solve any task.

As these four cognitive layers begin to work in unison across global fleets, we must ask ourselves: How quickly will our expectations of automation shift when every robot we encounter is learning from the collective experience of thousands of others? The era of the "isolated machine" is over. The era of the scaling, shared robotic mind has begun.